Whose version is it anyway?

Upgrading software is a common part of our lives. We bemoan upgrades of apps on phones that change features we had just come to enjoy. We wait for upgrades of applications on our laptops to finish installing. We dread operating system upgrades that might break things. We gloss over notifications of upgrades to online services. And we joke about upgrades in the more corporeal aspects of our lives. All the while, we wonder and debate when the next new feature will be included in an upcoming upgrade.

Behind every upgrade there is a version number. Somewhere, somehow, someone is making sure that they can keep track of which version was running before and after the upgrade.

The problem is that, when it comes to actually overseeing the software elements behind the scenes, versioning quickly becomes a point of debate and general consternation. How should we track versions? What should we version? Who should control the versions?

There are debates around:

- should we use semantic version numbers?

- should we include the build number in the version number?

- should we just include the Git commit ID and leave it at that?

- should we base the deployment pipeline on the semantic version or the commit ID?

- should we link the version number to the release date?

But… maybe, if we step back, we can see that a lot of the trouble around versions might just dissolve when we realise that there is more than one thing that needs to be versioned and that those “things” generally belong to different owners and are often quite independent of each other.

Think of it this way:

When stuff is upgraded, what exactly is being upgraded and who is responsible for the upgrade?

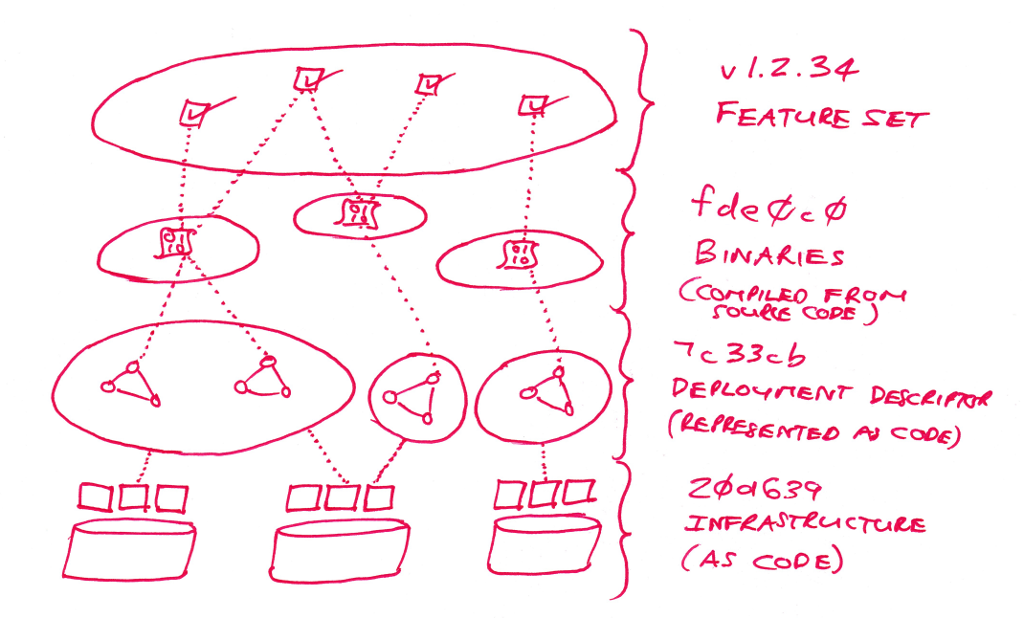

It turns out that for any given software system there are multiple equally valid, but distinct, points-of-view of the system being upgraded:

- for the software developers there is a code base that represents the exact code that was used to produce the binaries running behind the scenes and performing useful computations.

- for the deployment team (dev ops, sys admins, site reliability engineers) there is an exact configuration set that defines the current environment for the running system that may include the collection of binaries and the current parameters.

- for the product team there is the exact feature set that represents the business interests and describes what their user base can expect to find when using the system.

- for the infrastructure team there is an exact representation of the currently deployed set of infrastructure elements that are then used to host the software systems.

The real problems arise when these different points-of-view are overloaded, confused, entangled or missing.

In contrast, if we simply embrace the fact that a single system can, and should, have many “different versions” then the whole process of managing the system’s life-cycle becomes much simpler and less contrived.

To see how this plays out let’s consider a few different system upgrades and look at what is versioned, and by whom.

We will start with the software developer. From their point-of-view it’s all about the code. In particular, it is about the code base that they maintain in order to compile new binaries. As such, the software developer wants the version numbers to lead from running binaries back to the specific code that was compiled in order to create those binaries. For them it often suffices to simply tag the binaries with the version control commit ID. The upgrade should, therefore, contain references to the code version of the binaries. If the binaries are produced by a mono-repo then this is actually quite easy, because all code versions linked to binaries relate back to a single code repository.
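As a rough illustration of tagging a binary with its commit ID, here is a minimal Python sketch. It assumes the code lives in a Git repository; the `BinaryStamp` class, the `current_commit_id` helper and the `name@commit` label format are hypothetical names chosen for this example, not an established convention.

```python
import subprocess
from dataclasses import dataclass


def current_commit_id(repo_dir: str = ".") -> str:
    """Return the full commit ID of HEAD for the Git working tree at repo_dir."""
    result = subprocess.run(
        ["git", "rev-parse", "HEAD"],
        cwd=repo_dir,
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout.strip()


@dataclass(frozen=True)
class BinaryStamp:
    """Hypothetical record linking a built binary to the code that produced it."""

    name: str
    commit_id: str

    def label(self) -> str:
        # Abbreviate the commit ID for display; keep the full ID in the record.
        return f"{self.name}@{self.commit_id[:12]}"
```

A build script could call `BinaryStamp("billing-service", current_commit_id()).label()` and embed the result in the binary or its metadata, so that a running binary always leads back to one commit.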

With some binaries in hand we now consider the upgrade from the point-of-view of the deployment team. Here the actual inner workings of the binaries are abstracted away; the deployment team intends to treat the binaries as black boxes. The reality is that these are slightly porous boxes with a few parameters that need to be tweaked here and there. So the deployment team keeps track of three things: the versions of the binaries, the configuration information needed to parameterise the running of the binaries, and the overall deployment structure, which includes the exact collection of binaries together with replication levels or resource allocations. Again, the deployment team knows the importance of keeping tabs on what exactly is in flight, so they associate a version with the deployment descriptor.
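One way to picture a versioned deployment descriptor is to derive the identifier from the descriptor's content. The sketch below is only one possible approach and rests on assumptions: the descriptor is a plain dictionary, the field names (`binaries`, `parameters`, `replicas`) are made up for the example, and a truncated SHA-256 digest stands in for whatever identifier a real deployment tool would assign.

```python
import hashlib
import json


def descriptor_version(descriptor: dict) -> str:
    """Derive a short, content-based version for a deployment descriptor.

    The descriptor is serialised with sorted keys so that two descriptors
    with the same content always produce the same identifier.
    """
    canonical = json.dumps(descriptor, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Hypothetical descriptor: binaries are referenced by their code version,
# but the deployment version also covers parameters and replica counts.
descriptor = {
    "binaries": {"api": "0123456789ab", "worker": "ba9876543210"},
    "parameters": {"LOG_LEVEL": "info"},
    "replicas": {"api": 3, "worker": 2},
}
deployment_version = descriptor_version(descriptor)
```

Changing a replica count or a parameter yields a new deployment version even though no binary, and therefore no code version, has changed.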

All of this stuff has to run somewhere. There needs to be infrastructure for the system to leverage and consume. But this, too, is changeable. The infrastructure team may roll out new clusters, change storage replication levels or control network configurations. From the point-of-view of the infrastructure team the entire deployment is a black box. They are happy to abstract away all of the inner workings of the binaries as well as all of the effects of application configuration and the interconnections between components. However, they too need to have a record of what is in or out of play. For this, the infrastructure team resorts to “infrastructure as code” and maintains a version identifier for the current footprint.

Now, there is still the business itself to consider. The business renders services for customers in order to foot the bills of all of the above layers and turn a profit. And the business also maintains a view of the system that needs to be tracked and changed - the feature set. The business provides products with a particular feature set that they can then position and sell. This might include new features that help to retain market share, or specific features that can be offered to premium paying customers.

However, it is at this point that many teams may feel that there are already enough versioning systems hanging around. So the temptation is to just borrow one of those. For a long time the obvious victim was the software developers’ binary version identifier, which the business simply borrowed. With the increase in distributed computing deployments, it now becomes tempting for the business to switch to piggy-backing on the deployment version.

The reality is that the “feature set” is its own thing. The feature set that is available is a system abstraction that should be distinct from the deployment descriptor version and from the binary version(s), and clearly separate from the infrastructural footprint version.

To see this, we only have to consider a system bug fix. In particular, let’s consider a resource exhaustion bug: the type of bug that chews up system RAM, triggers CPU thrashing and finally results in system failure. In order to track down and resolve particularly recalcitrant versions of these bugs there may need to be a coordinated (or at least correlated) effort between developer teams, deployment teams and infrastructure teams. The infrastructure team may need to provision additional large nodes with higher RAM in order to reduce the frequency of failures. The deployment team may need to increase the replica counts and change rollout strategies in order to reduce the impact of restarts. The development team may need to prepare a few different binaries in order to first understand the bug and then to fix the issue. Finally, the deployment teams and infrastructure teams would need to undo the over-provisioning that was introduced as a stop-gap measure.

All the while, it should be clear that from the business’ point-of-view the feature set has not changed. Therefore, the version identifier that indicates what customers should be able to do with the system should remain constant.

That is, it should be clear that the business owners should ensure that they version the product level feature set in a manner that is quite distinct from that of the lower layers of system abstraction.
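The bug-fix scenario can be sketched as a record with one version identifier per point-of-view. This is an illustrative model only: the `SystemVersions` class and every identifier value below are invented for the example.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SystemVersions:
    """One version identifier per owner; all values here are hypothetical."""

    code: str            # owned by the software developers
    deployment: str      # owned by the deployment team
    infrastructure: str  # owned by the infrastructure team
    feature_set: str     # owned by the product team


before = SystemVersions(
    code="commit-a1b2c3",
    deployment="deploy-41",
    infrastructure="infra-17",
    feature_set="features-7",
)

# Stop-gap: larger nodes and more replicas while the bug is investigated.
stop_gap = replace(before, infrastructure="infra-18", deployment="deploy-42")

# Fix: a new binary is built and rolled out.
fixed = replace(stop_gap, code="commit-d4e5f6", deployment="deploy-43")

# Clean-up: the over-provisioning is undone.
settled = replace(fixed, infrastructure="infra-19", deployment="deploy-44")

history = [before, stop_gap, fixed, settled]
feature_versions = {state.feature_set for state in history}
```

Across the whole episode three of the four identifiers move, some of them more than once, while `feature_versions` contains a single value: what customers can do with the system never changed.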

With the different system abstractions being versioned separately, it becomes possible to consider more complex change scenarios. For example, the resource bug may take many weeks to really pin down and resolve. All the while, the business needs to go on; business will need to release other features in line with product road maps. Or, business might need to orchestrate slow launches of features to different market segments or to different user cohorts. For this, the product team might make use of control surfaces implemented within the software applications specifically to allow for the exposure of features and code paths to the correct user groups. All the while the business product team would keep track of version identifiers describing the release status of the feature sets.
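A control surface of this kind might look something like the sketch below, in which each feature-set version records which user cohorts may see which features. The class name, feature name and cohort names are assumptions made for illustration; real feature-flag systems differ in many details.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureSetRelease:
    """A product-level release: which features are exposed to which cohorts."""

    version: str
    # Maps a feature name to the set of cohorts that may use it.
    exposure: dict = field(default_factory=dict)

    def is_enabled(self, feature: str, cohort: str) -> bool:
        # Unknown features are treated as switched off for everyone.
        return cohort in self.exposure.get(feature, set())


# Slow launch: the feature set version changes as exposure widens,
# while the binaries underneath may stay exactly the same.
release_7 = FeatureSetRelease("features-7", {"bulk-export": {"premium"}})
release_8 = FeatureSetRelease("features-8", {"bulk-export": {"premium", "standard"}})
```

Here the move from `features-7` to `features-8` is purely a product decision: the application code consults `is_enabled`, and the product team owns the version identifier that describes the exposure.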

Software systems consist of many moving parts that can be considered and managed at varying layers of abstraction. Separating out these layers of abstraction allows for the specialisation of skills and independence in the variability of different aspects of the system.

In order to internalise and facilitate the layers of abstraction it is important to identify whose point-of-view is under consideration, and to enable individual ownership of these different points-of-view of the system through the use of distinct version identifiers.